Beyond payment tokenization: Why developers are choosing Evervault's encryption-first approach

How Evervault’s dual-custody encryption model eliminates the fundamental limitations of traditional tokenization for PCI compliance

Today, the Internet is less secure than it was a decade ago.

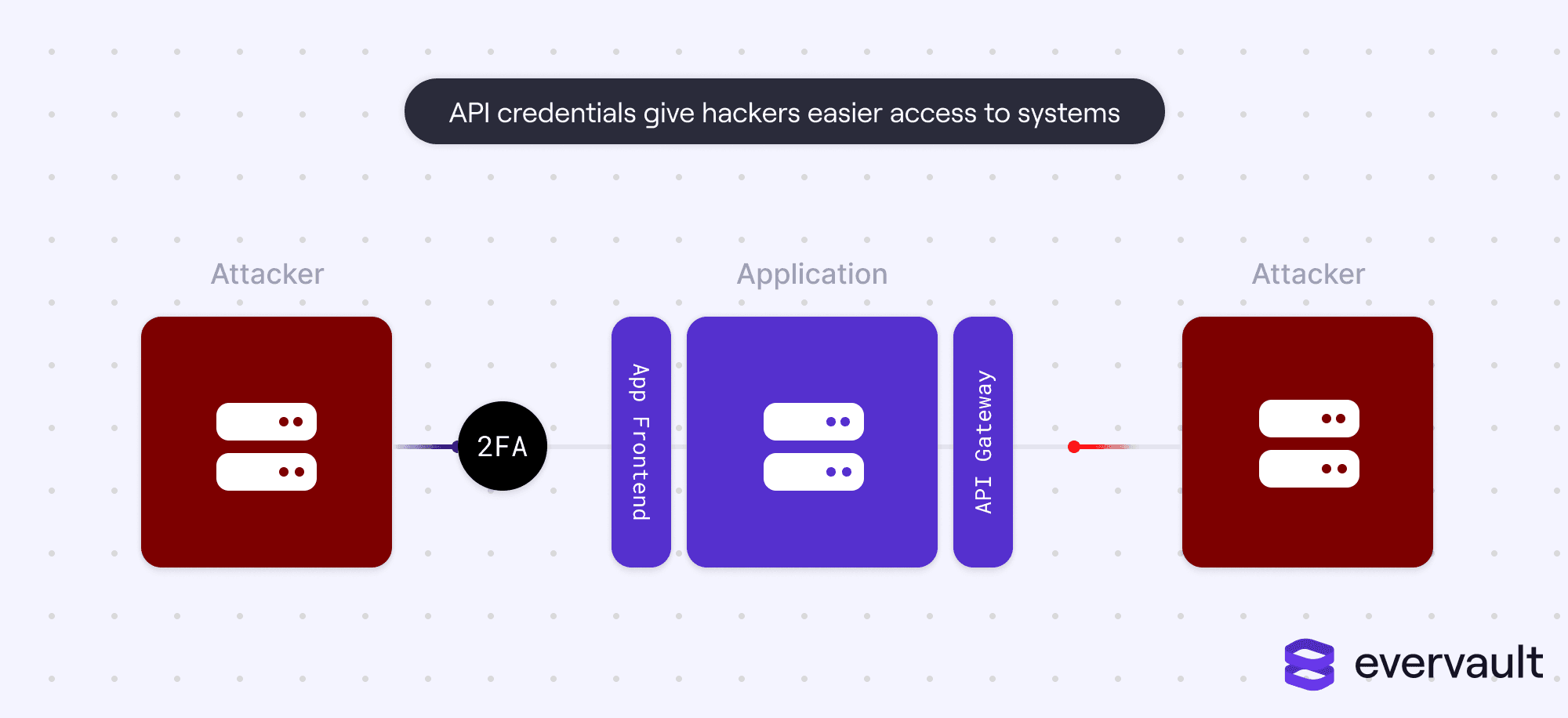

On a surface level, this claim might sound a little ludicrous. After all, there are far more user-facing security measures today. SMS-based 2FA, authenticator apps, and stringent password rules all evolved in the last decade. The login process is becoming increasingly hard, even for ordinary software like baby monitor apps. For many friends, both in and out of the tech world, the web can feel a bit too secure.

But it isn’t. The hacker turf war has simply shifted. Nowadays, credentials are not limited to basic usernames and passwords; instead, most authorization happens in the background via APIs and API Secret Access Keys. Those access keys are like super-passwords. When compromised, they can spell serious disaster, especially because they don’t have safeguards such as human-in-the-loop verification (e.g., 2FA).

In this piece, we’ll touch on all things related to API exploits, including core risk factors, historical examples with real damages, and recommendations on how to best safeguard yourself and your customers from a company-ending data leak.

This article is not a criticism of APIs; it’s a criticism of poor API engineering. APIs are valuable to businesses today. They often power repeatable subprocesses, such as sending SMS notifications or making recommendations, or sometimes niche tasks like importing music catalog data.

For some APIs, the stakes are relatively low. Hackers aren’t interested in targeting an API that returns Star Wars location data. (Yes, that’s a real API.) But things get dicey when APIs gatekeep silos of user data. Examples of such APIs include CRM APIs for prospect data, payroll APIs for employee data, and bank APIs for financial data.

Again, these APIs are a good thing. They are critical for products to work well with other products; imagine how much stress CMOs would face if Salesforce didn’t talk to HubSpot. Simply put, integrations enable businesses to craft a software system that works for them without losing data consistency across applications.

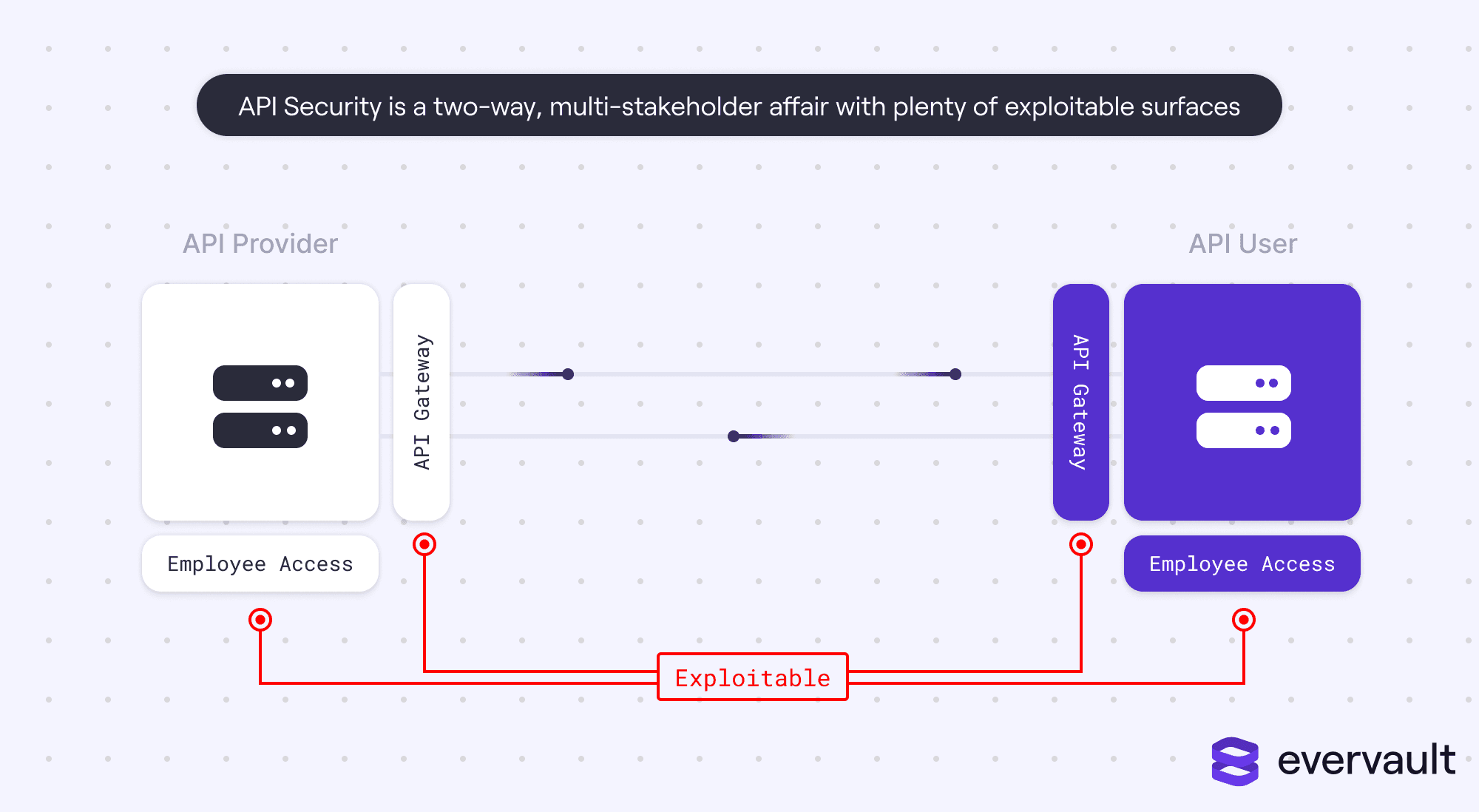

The issue is that API security isn’t a simple checklist of tasks. There are some obvious ones (such as keeping API access tokens encrypted), but even the best practices can leave icky edge cases. Notably, API security is a two-way street. API maintainers are often the target of hackers (e.g., Twitter and the Twitter API). But so are API users, particularly businesses that enable their end customers to merge data on their behalf.

Integrations are almost as old as the Internet. Software always had to talk to other software. What’s new, however, is standardization.

Standardization, like APIs, isn’t necessarily a bad thing. Old integrations built in the ’90s were often notoriously insecure. Because those integrations had no public docs and were privately built, engineers would trust the obscurity of their design as sufficient security. It’s a story of “It works until it doesn’t,” best illustrated by the Moonlight Maze scandal. Starting in 1996, bespoke integrations between defense contractors and the US Government were infiltrated for over two years. Military troop formations, NASA schematics, and other highly sensitive documents were stolen and sold.

Today, the majority of APIs are publicly documented and standardized, with most using a REST paradigm or GraphQL. Standardization is great; it enables engineers to build integrations quickly and sanely. Stripe, in particular, has been lauded for its API design and beautiful documentation. Stripe and other similar commercial APIs release the core routes, credential system, and data schemas to the general public.

One might argue that public API documentation forces good security. Sure. In theory.

In reality, integrations have opened a trove of security issues, some better known than others. Because integrations connect multiple applications together, they expose many vectors for attack.

In 2019, Justdial’s API was breached. Theoretically, Justdial’s API had a standard authentication design implementation, with all the necessary bells and whistles to restrict API content to the right users. However, due to engineering mishaps, Justdial’s API would return sensitive user data to anyone that could provide a logged phone number, leaking PII such as names, profile pictures, emails, and dates of birth. In this scenario, user credentials were intact, but data was exploited because of a poorly built API.

Justdial’s breach highlights why API security is a two-way street. While there are plenty of instances where API users have fumbled their keys’ secrecy, security starts with the API’s developer. Sometimes, API bugs don’t lead to actual data leaks; a great example is Salesforce’s 2018 crossed-wires incident where the API wrote real customer data to the wrong accounts. But in the case of Justdial, the damage is real—while they weren’t sued, this incident permanently hurt the company’s reputation.
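To make the Justdial failure mode concrete, here is a minimal Python sketch of the check that separates authentication from authorization. It is an illustration under stated assumptions, not a reconstruction of Justdial’s code: `USERS`, `SESSIONS`, `get_profile`, and `Forbidden` are all hypothetical names, and the in-memory dictionaries stand in for a real database and session store.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class UserRecord:
    phone: str
    name: str
    email: str


# Hypothetical in-memory stand-ins for a user table and a session store.
USERS = {"5550100": UserRecord("5550100", "Asha", "asha@example.com")}
SESSIONS = {"session-abc": "5550100"}  # session token -> phone of the logged-in user


class Forbidden(Exception):
    """Raised when a caller asks for a record it is not allowed to read."""


def get_profile(session_token: Optional[str], phone: str) -> UserRecord:
    # Authenticate first: a missing or unknown session is rejected outright.
    caller_phone = SESSIONS.get(session_token) if session_token else None
    if caller_phone is None:
        raise Forbidden("not authenticated")
    # Then authorize: knowing a phone number is not proof of owning the account.
    if caller_phone != phone:
        raise Forbidden("caller may only read their own profile")
    return USERS[phone]
```

The point of the sketch is the second check: an endpoint that looks up a record by an identifier the caller supplies has to confirm that the caller is entitled to that specific record, not merely that the identifier exists.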

Sometimes, API security works as intended, but due to poor API design, customer data is made dangerously public.

The best example in recent history is Venmo’s 2018 public transactions fiasco. Venmo’s API, for some bizarre reason, enabled any developer to retrieve the last 20 public transactions. While credit card numbers were protected, other payment details were public, including names, recipients, and payment messages. Soon, developers realized they could compile a massive database of public payments by constantly scraping Venmo; at scale, they could mine the transaction messages, some serious and some humorous, for individuals who might be using Venmo for illicit transactions and blackmail them. Additionally, hackers could find users’ email addresses and attempt to phish them based on their known spending habits. In response, Venmo shut down the API and relaunched it with better rate limiting. Nevertheless, a researcher demonstrated in 2019 that the new restrictions were still easy to work around.
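Rate limiting of the kind mentioned above is often implemented with a token bucket. The following Python sketch shows the idea; it is not Venmo’s implementation, and the class name, the `capacity` and `rate` values, and the per-client `buckets` dictionary are assumptions chosen for illustration.

```python
import time


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, capacity, rate, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per API key (or per client IP for unauthenticated routes).
buckets = {}


def check_rate_limit(client_id):
    bucket = buckets.setdefault(client_id, TokenBucket(capacity=5, rate=1.0))
    return bucket.allow()
```

Rate limiting only slows a scraper down. It does not fix the underlying design decision to expose the data publicly, which is why it proved insufficient on its own.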

One of the most perplexing examples of poor API design is Travis CI’s. Travis CI infamously exposes user logs of free accounts. Because many developers (recklessly) commit access tokens and GitHub authentication credentials to repositories, Travis CI is continuously publishing confidential tokens to the web. Is, not was. Even after being criticized for the flaw, Travis CI refused to fix the bug, writing it off as an encouragement for developers to better safeguard their secret tokens. While it’s true that developers should never commit secret tokens to repositories, this response feels lazy. Even when developers follow the best guidelines, accidents happen.

Even if an API is properly secured and designed, it can still be breached if employees are compromised.

Security vendors should not store API keys in plain text, even as the issuer. By encrypting customer keys, vendors force hackers to clear another (massive) hurdle before they can access user accounts, which buys vendors time to cycle keys in case of a breach.
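For an issuer that only needs to verify keys it handed out, one related technique is to store a keyed hash of each key rather than the key itself. Note that this is hashing, not encryption, so it is a substitute that fits the issuer case only. The Python sketch below uses the standard library; `PEPPER`, `KEY_DIGESTS`, `issue_key`, and `verify_key` are hypothetical names, and in practice the pepper would come from a secrets manager rather than being generated at import time.

```python
import hashlib
import hmac
import secrets

# Assumption: a server-side secret ("pepper") that lives outside the database.
PEPPER = secrets.token_bytes(32)

# Hypothetical key store: key ID -> digest. The plaintext key is never persisted.
KEY_DIGESTS = {}


def _digest(api_key):
    return hmac.new(PEPPER, api_key.encode(), hashlib.sha256).digest()


def issue_key():
    key_id = secrets.token_hex(4)
    secret = secrets.token_urlsafe(32)
    api_key = f"{key_id}.{secret}"
    KEY_DIGESTS[key_id] = _digest(api_key)
    return api_key  # shown to the customer once, then discarded


def verify_key(api_key):
    key_id, _, _ = api_key.partition(".")
    stored = KEY_DIGESTS.get(key_id)
    if stored is None:
        return False
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(stored, _digest(api_key))
```

A database dump from this scheme yields digests that cannot be replayed as keys. Vendors that must present the original token to a third party cannot use hashing and need reversible encryption instead.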

API users need to store their API keys properly to avoid accidentally revealing them to the public.

One of the most recent incidents along these lines happened in 2022 to a whole class of email-sending software. CloudSEK, a security research company, reported that roughly half of the Google Play apps it analyzed were leaking Mailchimp, SendGrid, or Mailgun API keys. These API keys correlate to accounts that contain contact details for over 54 million users, that is, anybody who ever received an email from or conversed with any of the email solutions. Even worse, these API keys guard SMTP information, which hackers could use to pull off convincing phishing scams.

Recently, secrets management software solutions like Doppler have exploded in popularity, replacing manually maintained .env files. This is a welcome evolution; secrets should be safeguarded and never committed to source code, even if the source code is closed source. And, to GitHub’s credit, they recently announced a system to stop developers from accidentally committing API keys to public repositories, but the same protection doesn’t extend to private repositories.
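Teams that want a similar safety net for private repositories sometimes run a simple scan before each commit. The Python sketch below shows the general idea with two illustrative regular expressions. These are not the rules GitHub uses, the `PATTERNS` table and `find_secrets` function are hypothetical, and a real scanner would need a much larger, provider-specific rule set.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
PATTERNS = {
    # AWS access key IDs are commonly recognizable by the "AKIA" prefix.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A variable whose name mentions a secret, assigned a long quoted string.
    "generic_assignment": re.compile(
        r"(?i)(?:api[_-]?key|secret|token)\w*\s*[:=]\s*['\"][A-Za-z0-9_\-]{16,}['\"]"
    ),
}


def find_secrets(text):
    """Return (line_number, rule_name) pairs for lines that look like they hold a secret."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits
```

Pattern matching of this kind produces both false positives and misses, so it complements, rather than replaces, keeping secrets out of the repository in the first place.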

Regardless, secrets management software isn’t a silver bullet. There are cases where companies have leaked their API keys by accidentally packaging them in web request responses; IKEA notably leaked its Salesforce access token, hypothetically giving hackers access to their customer success data (which definitely contains PII). Even more recently, OpenAI users have been notoriously leaking their own OpenAI API keys by bundling them into app binaries.

Sometimes a company’s API keys are leaked because an employee is compromised. This often occurs via a phishing scheme; that’s exactly what happened to Dropbox in 2022, when attackers phished employee credentials and copied 130 of its GitHub code repositories, which included API credentials used by Dropbox developers, potentially exposing both Dropbox and vendor data specific to Dropbox.

In other scenarios, a single stolen API key could lead to other API keys being leaked. For instance, in 2022, Imperva’s AWS key was stolen, allowing access to potentially 1,400 other API keys.

While some of these breaches are only solved through careful engineering, a good practice to minimize damage is to routinely cycle API keys. Sometimes, breaches go undetected, and by cycling keys, companies can limit the amount of damage that a hacker could do.

Today, vendors commonly connect user accounts in their systems with another vendor’s system on behalf of users. This typically involves the host vendor application storing a customer-specific access token that can retrieve and push customer data from the integrated application.

Ideally, that access token can only be used in conjunction with the vendor’s API key. That isn’t always the case, however. For instance, if hackers were able to exploit a vendor’s Salesforce integration, they could potentially access every single customer’s Salesforce account. It’s particularly damaging for integrations to data-rich platforms like Salesforce that store PII such as names, emails, and addresses of a customer’s prospects.

A real-world example of this is GitHub’s 2022 security incident, in which attackers stole OAuth tokens issued to applications like Heroku and Travis CI, tokens that granted those applications access to GitHub. With these OAuth tokens, hackers were able to access private repositories, which could reveal exploitable security holes as well as carelessly committed secret tokens.

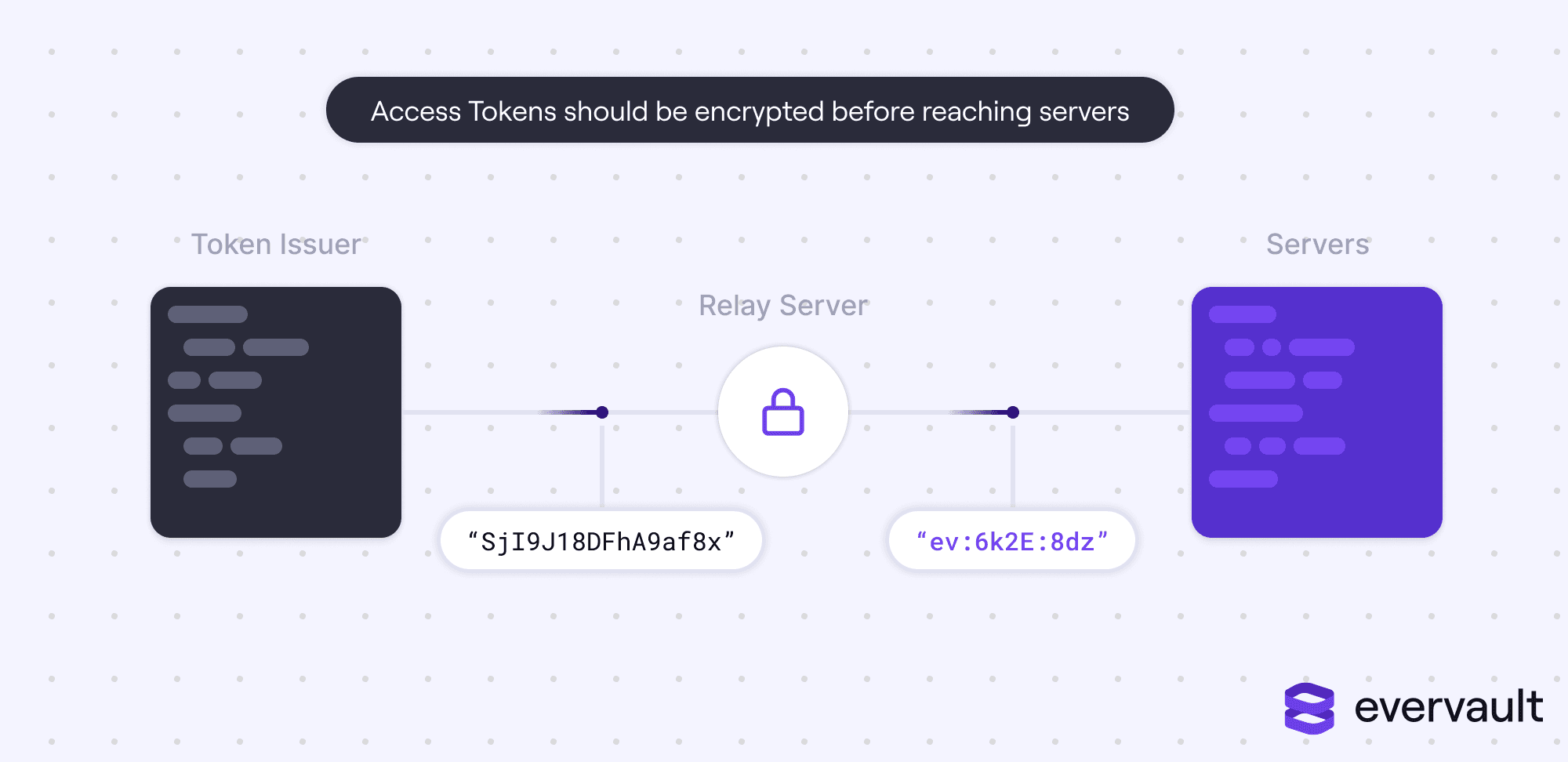

User OAuth access tokens should never be stored in plaintext.

In general, users’ plaintext tokens should never touch your servers. This is part of the reason why we built Evervault’s inbound and outbound relay products, which safely encrypt and decrypt tokens mid-transit.

While CI/CD systems are a bit more than an integration or an API, they raise similar challenges from a security standpoint. Infiltrating a CI/CD system is somewhat of a hacker’s holy grail. CI/CD systems govern the process of publishing new developer code to production, which concentrates a great deal of security risk in one place. They often contain cloud credentials, OAuth tokens, SSH keys, and code-signing certificates.

Hackers have already recognized this. Earlier this year, CircleCI was targeted; hackers installed malware onto an employee’s laptop, gaining access to customer source code and encryption keys that could decrypt sensitive data. Codecov was also targeted through a Docker error message exploit, leaking environment variables stored in the platform.

By no fault of API users or developers, keys can sometimes be leaked by infrastructure providers. This is exactly what happened to Cloudflare in 2017, when a memory error caused arbitrary HTTP requests and response bodies to be leaked, which could include authentication tokens. The Cloudflare incident wasn’t any customer’s fault—customers trust that their DNS and edge solution doesn’t accidentally leak requests or responses. Unfortunately, cached requests pose a risk of exposing secrets in the case of engineering flaws.

The only way to mitigate a risk like this is to routinely cycle API keys.

As evidenced throughout this piece, there are many ways that hackers access integrations. While this list isn’t exhaustive, nor does it provide air-tight coverage, it details good practices that significantly protect businesses and mitigate damages in the case of a breach.

Encrypting API keys is a recommendation that extends to API issuers, API users, and vendors that store API tokens on behalf of customers. By storing only encrypted access tokens and never transmitting plaintext tokens to servers, businesses can dramatically decrease damages in the case of a breach. An encrypted API key is as useless as a random string. This is one of the primary reasons why we built Evervault.

API keys, even when encrypted, should not be committed to repositories. Instead, they should be installed independently. Some businesses automate this process by using tools such as Doppler.
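Installing keys independently usually means the application reads them from its environment at startup, with the deployment tooling responsible for putting them there. A minimal Python sketch, assuming a hypothetical `require_secret` helper and a hypothetical variable name `EXAMPLE_VENDOR_API_KEY`:

```python
import os


class MissingSecret(RuntimeError):
    """Raised when a required secret was not injected into the environment."""


def require_secret(name):
    """Read a secret that the deployment tooling injected into the process environment."""
    value = os.environ.get(name, "").strip()
    if not value:
        # Fail at startup rather than falling back to a hardcoded default.
        raise MissingSecret(f"environment variable {name} is not set")
    return value
```

A service would call something like `require_secret("EXAMPLE_VENDOR_API_KEY")` once during startup. Failing loudly when the variable is absent removes the temptation to keep a fallback key in source code.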

By installing mobile device management (MDM) solutions like Jamf and anti-malware scanning software like CrowdStrike on work laptops, businesses can avoid what happened to CircleCI. Given these tools can be complicated to set up, businesses often use bulk providers like Zip to deploy, configure, and manage these solutions across platforms.

By consistently cycling API keys, businesses prevent attackers from doing significant damage if they do manage to get ahold of a secret key at any point in time.
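Cycling keys consistently is easier when something flags the keys that are overdue. The Python sketch below assumes you track a creation timestamp per key; the 90-day `MAX_KEY_AGE` is an arbitrary example policy, and `keys_due_for_rotation` is a hypothetical helper rather than part of any vendor’s API.

```python
from datetime import datetime, timedelta, timezone

MAX_KEY_AGE = timedelta(days=90)  # assumed policy; pick a window that fits your risk model


def keys_due_for_rotation(created_at_by_key_id, now=None):
    """Return the IDs of keys older than MAX_KEY_AGE, oldest first."""
    now = now or datetime.now(timezone.utc)
    due = [
        (created, key_id)
        for key_id, created in created_at_by_key_id.items()
        if now - created > MAX_KEY_AGE
    ]
    return [key_id for _, key_id in sorted(due)]
```

Running a check like this on a schedule turns rotation from a best intention into a routine task, and the same list is the starting point for emergency rotation after a suspected breach.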

These proposed solutions are not airtight. Attackers can find ways to slip through the cracks. But by employing all of these strategies, gaining access to production resources is harder. And, in the case of a breach, further exploiting the encrypted data could prove difficult. Sometimes, all you need is to buy yourself time in the case of a breach; API credentials can easily be cycled, and you have significantly more time to safely cycle keys if the stolen keys were encrypted.

Integrations remain a great thing. They dramatically changed how B2B tools are designed, grown, and used. Unfortunately, integrations also introduced a trove of security concerns. Given the long history of breaches with actual consequences, it’s critical for today’s developers to take integration security seriously.

Use Evervault to easily secure your third-party API integrations by fully encrypting customer access tokens at rest and in transit.
